In a messaging experience not far away…

…and actually as close as your pocket, there’s a chatbot ready to help you with any issue that you might be having with your Amazon.com order.

Here at Helpshift, we know a thing or two about chatbots. Our customers have been using Helpshift chatbots to improve the support experience for billions of people around the world. We're taking that knowledge out into the field with this new blog series: Bot Wars. Over the next few months, we'll be conducting a comprehensive review of three of the most popular chatbots that top brands use today to deliver (hopefully) exceptional customer service.

Why are we doing this? While there certainly are prominent brands using chatbots, many are still mulling over whether or not they want to implement them. One of the best ways to get a sense of what you’re looking for is to see how others have succeeded (or failed). Our hope with this series is that you’ll start to get a sense of what a great chatbot experience looks like, so you can decide with confidence if chatbots are a good option for your customer support organization.

1. Overview

We chose Amazon's chatbot as the first subject of our review because of the sheer scale of Amazon's support organization. Amazon employs over 70,000 customer service agents in more than 32 countries, who help millions of Amazon customers every day in dozens of languages.

As we've seen with our own customers, chatbots can be an effective way to dramatically scale customer support without adding agents. Of course, that benefit can only be realized if the chatbots are implemented properly, giving customers information and self-serve options that deflect tickets so agents have more time to focus on customers who truly need one-on-one support.

Amazon provides bot support through its messaging channel, which is accessible on its website and in the Amazon mobile app. To get support, customers must first submit a query to Amazon's knowledge base. A 'contact us' option then appears on the knowledge base search results page, leading to options for messaging or phone-based support.

Amazon’s messaging experience isn’t quite asynchronous. At Helpshift, we consider an asynchronous messaging experience to be one where you can leave and come back to the conversation at your leisure — so you can do a grocery run while you wait for an agent and jump back into the conversation when you get back.

With Amazon’s messaging experience, you can leave and come back and still see all of the previous interactions you’ve had with chatbots (although we did encounter some issues getting older messages to load and display). However, if you need help from an agent, the experience becomes session based. You have to stay in the messaging environment and complete the conversation then and there. Additionally, on the mobile app, responses from an agent don’t result in a push notification, reinforcing the need to keep the messaging session open.

To get the most out of chatbots and other forms of automation, we recommend using an asynchronous messaging environment.

The sections that follow summarize features in three broad categories and give a score for each. Each category is scored out of 5, so a perfect score is 15. Here's a scoring rubric for your reference:

Scoring Rubric

| Criteria | Points |
| --- | --- |
| Awareness of context & proactivity | 5 |
| Ease of use & efficiency | 5 |
| Persona & Empathy | 5 |
| Total | 15 |

2. Awareness of context and proactivity

For chatbots to be helpful, they need to first understand the context of the customer’s issue and then proactively provide the customer with a path to resolution — whether that be by providing self-help resources or transferring to the right bot or agent that can help resolve the issue.

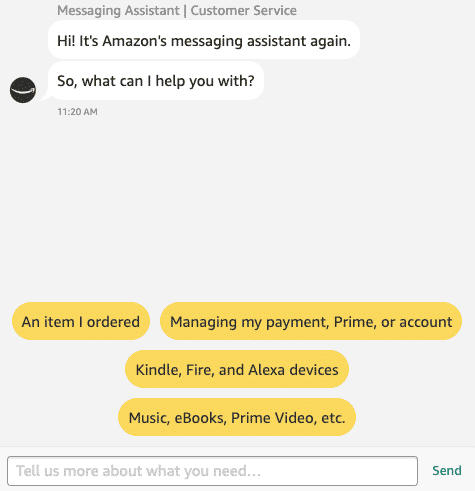

When you first enter Amazon's messaging experience, a bot immediately greets you and briefly explains how you can interact with it. It also offers several options that customers can select to indicate what their issue is about. Alternatively, customers can free-type a description of their issue, which AI then analyzes to route the issue to the appropriate bot or agent.

We took the time to test out both options to see how Amazon’s bot performed.

The chatbot provided us with the following options upfront:

- An item I ordered

- Managing my payment, prime or account

- Kindle, Fire, and Alexa devices

- Music, Ebooks, Prime Video, Etc.

These options seem to cover the questions customers most commonly ask. We selected 'Kindle, Fire, and Alexa devices', which brought us into a workflow that asked about common issues and surfaced troubleshooting text that appeared to come from Amazon's knowledge base. When we chose the option indicating that we were still experiencing connection issues, the bot escalated us to an agent.

We also wanted to test Amazon’s AI capabilities by free-typing a few queries. These surfaced a few interesting results:

Query 1 – Where are my earplugs?

When we asked this question, the bot immediately recognized that the query related to a specific item we had previously ordered: in this case, a pair of reusable earplugs (San Francisco can be noisy!). The bot presented the earplugs order as a single option and, once we selected it, advised that the item had been delivered on the 21st of August before offering additional options. We also tested the query 'where is my order?' and were taken down the same path, although we were presented with more order options.

The fact that we were presented only with the earplugs order when specifically asking about them suggests that Amazon’s AI is smart enough to return specific orders based on the customer’s query.

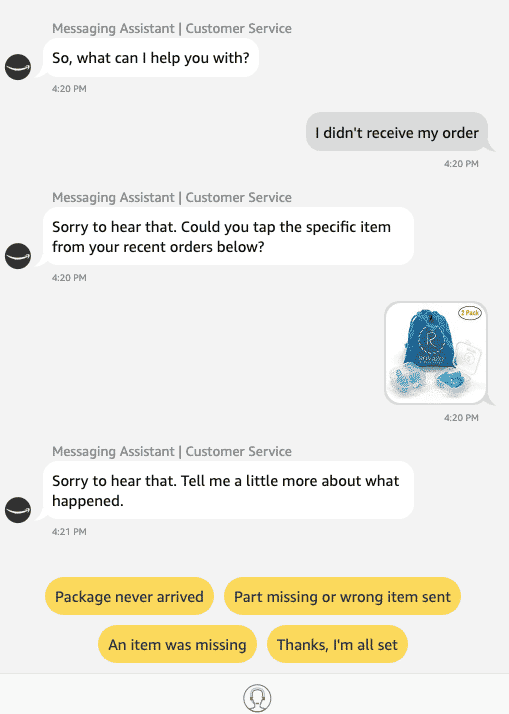

Query 2 – I didn’t receive my order

While this query seems similar to 'where is my order?', Amazon's AI treated it slightly differently, apparently understanding the nuance between asking where something is and saying it wasn't received. In this case, the bot returned options for missing or damaged items. Presumably, this is because Amazon often packages different items together, so if a customer didn't 'receive' an item, it may simply not have been in the box with the other items.

Query 3 – I need a Christmas card for my cousin

We tested this query to see if Amazon’s bot would help with product suggestions. The short answer to this is no. Asking about Christmas cards surfaced options for gift cards. Similarly, asking about a beach umbrella immediately resulted in a transfer to an agent. Apparently, Amazon’s bot isn’t a fan of sun and sand.

Helpshift score: 5.0

Overall, Amazon's chatbot quickly understood our issue and proactively surfaced resources and options towards resolution. Typed queries were generally well understood, although the bot isn't programmed to give product suggestions.

3. Ease of use & efficiency

Ultimately, chatbots are only useful if they’re easy for customers to use and can offer a more efficient experience compared to speaking directly with an agent. Beyond contextual understanding and proactivity, chatbots need to take a customer down a logical and seamless path to resolution — avoiding bottlenecks, delays or the dreaded frustration loop of ‘sorry, I don’t understand, can you rephrase your question?’ over and over again with no escape. In terms of efficiency, a good bot should waste as little of the customer’s time as possible by providing pertinent information up front without the customer having to dig around.

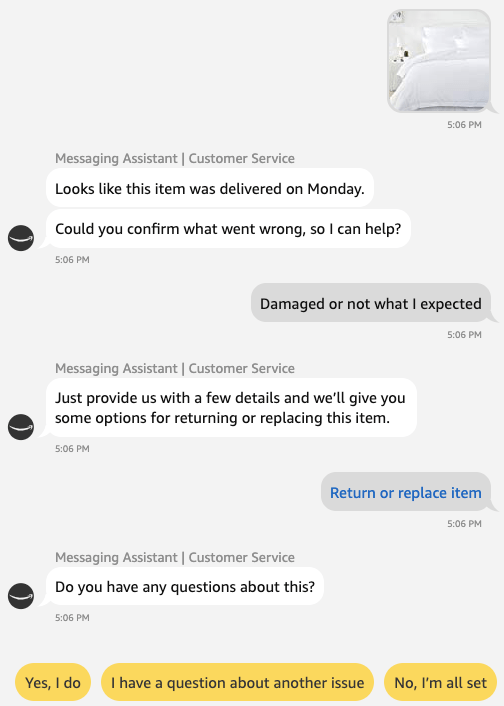

Luckily, a member of our team recently ordered a sheet set on Amazon but received the wrong color — a perfect opportunity to throw another test at Amazon’s chatbots.

We followed the same process as before with the earplugs and selected the option for ‘return or replace item’. This triggered the returns self-help page to open in a new tab. However, when we attempted to request an exchange we ran into a hiccup where we weren’t given an option to choose a different color — instead we were given the option to exchange for completely unrelated items.

This was not ideal, so we went back to the messaging environment and selected the agent option: a nondescript icon of a person wearing a headset at the bottom of the thread. An agent came online in less than a minute and quickly let us know that, for reasons unknown, the item was not eligible for exchange. We were sent a return label via email, and the team member was able to re-order the sheets in the correct color.

Overall, in both this and the preceding examples, Amazon's chatbot experience followed a logical flow that ultimately got us to a resolution. The bot and messaging environment also offer several easy ways to escalate to an agent: the bot asks whether more help is needed and provides corresponding options (No, that's all; Yes, I have a different question); an agent icon sits at the bottom of the chat box (although it comes and goes depending on the interaction); and customers can simply reply 'agent' to the bot.

Despite the overall ease of use, we found it interesting that Amazon's chatbot wasn't able to retrieve information about a specific product's return or exchange restrictions. Had the chatbot known that the sheet set wasn't eligible for exchange, it could have provided this information automatically, along with a return label, without the need for us to speak with an agent. This would have led to a much more efficient support experience overall.

Other features add to ease of use and efficiency as well, such as the ability (in most cases) to leave the chat and come back to it later, the ability to switch from desktop to mobile, and the ability to free-type queries.

One major feature that is important for improving ease of use and efficiency, but that was missing from Amazon’s chatbot experience, is feedback collection at the end of every interaction. Implementing this is as simple as programming a bot to collect feedback before a ticket is closed (How would you rate your support experience?).

Helpshift score: 4.0

The chatbot experience is generally easy and intuitive for customers to use. However, the bot could have provided additional information about returns and exchanges. Also, there was no option to provide feedback.

4. Persona & empathy

One aspect of chatbots that can make a big impact on the customer experience is their persona and ability to empathize with customers. Of course, chatbots are pre-programmed by human beings, so customer service teams need to make sure that they translate the brand voice and tone over to their chatbots in order to create a consistent experience for customers.

Chatbot personas can range from bland to silly — with some brands employing a mascot as their chatbot, such as an animal. Others try to pass their bot off as a real person, which we don’t recommend. Likewise, how a chatbot responds to customers can also vary, with some brands opting for witty replies while others prefer drier responses. Regardless of the persona and voice a brand chooses for its chatbots, at the very least they should be friendly and employ a helpful and empathetic tone of voice.

Amazon certainly opted for pragmatism here. Its chatbot doesn't have a specific persona or name and simply refers to itself as a 'messaging assistant'. Likewise, responses are plain but helpful, with a conversational tone ("ok," "so," "sure," "let's see"). In terms of empathy, the chatbot did offer a light apology when we indicated that items were missing or otherwise not what we expected.

Helpshift score: 5.0

While it won't crack any dad jokes, Amazon's chatbot is friendly, helpful and empathetic.

5. Verdict

Overall score – 14/15

We gave Amazon's chatbot an overall score of 14 out of 15, which is outstanding considering that chatbot technology is still relatively new and many brands have struggled to implement it properly.

Here’s a quick breakdown:

Score Breakdown

| Criteria | Score |
| --- | --- |
| Awareness of context & proactivity | 5 |
| Ease of use & efficiency | 4 |
| Persona & Empathy | 5 |
| Total | 14/15 |

Strengths – Amazon's chatbot is ultimately a net positive for Amazon's overall customer experience. If the point of a chatbot is to provide a better experience than a person can for routine issues, then Amazon's chatbot does just that. The options provided throughout the experience followed a logical flow towards resolution, and the bot was generally proactive in providing pertinent information as well as an easy escalation path to an agent when needed.

Weaknesses – Currently, Amazon's chatbot doesn't provide product suggestions based on a customer's query, which may be a missed opportunity. It also wasn't able to retrieve product-specific information that would have saved us time on our particular issue. Finally, the chatbot doesn't ask customers for feedback on their experiences.

We hope you’ve found this first Bot Wars battle useful in understanding what a great chatbot experience should look like. Stay tuned for the next installment of Bot Wars coming next month!