In a messaging experience not far away…

…and actually as close as your pocket, there’s a chatbot ready to help you with any issue that you might be having with your Capital One credit card.

Welcome back to Bot Wars, our blog series that reviews some of the most popular chatbots out in the market! If you missed the first edition on the Amazon.com chatbot, you can read it here. Otherwise, skip the recap and jump into this month’s review of the Capital One chatbot, Eno.

About Bot Wars

Here at Helpshift, we know a thing or two about chatbots. Our customers have been using Helpshift chatbots to improve the support experience for billions around the world. We’re taking that knowledge out into the field with this new blog series: Bot Wars.

Why are we doing this? While there certainly are prominent brands using chatbots, many are still mulling over whether or not they want to implement them. One of the best ways to get a sense of what you’re looking for is to see how others have succeeded (or failed). Our hope with this series is that you’ll start to get a sense of what a great chatbot experience looks like, so you can decide with confidence if chatbots are a good option for your customer support organization.

1. Overview

Chatbots and in-app messaging are particularly compelling technologies for retail banking. Beyond the benefits of streamlining the support experience, chatbots are also inherently more secure than speaking to a human about your finances, as this chilling ad from Barclays in the UK demonstrates. Not only are customers pre-authenticated by being logged in to the app (often using biometrics, such as a fingerprint or face scan), but offering support solely via an in-app messaging channel dramatically reduces the risk of phishing scams. If customers know that all communications from their bank will be within a secure in-app messaging environment, then they’ll know to approach communications received from other channels with suspicion.

While these security benefits are not lost on financial institutions — indeed, many banks have incorporated messaging and bots into their service offerings to various degrees — getting consumers to trust chatbots is a formidable challenge. It doesn’t matter if chatbots and messaging are more secure if consumers won’t adopt the technology.

One brand that has worked hard to develop a chatbot that their customers trust is Capital One. Their chatbot, Eno, made its debut in early 2017 as the banking industry’s only ‘gender-neutral’ AI assistant — a feature that apparently improves trust by removing the potential for gender bias in people’s interactions with it. The name ‘Eno’ is the ‘One’ in Capital One written backwards — no points deducted for lack of originality.

Currently, Eno is only available to Capital One credit card holders. It can be accessed directly within the Capital One app or online. Beyond responding to customer questions, Eno can also send proactive notifications about account activity, provided the customer opts in to the service. Another interesting feature of Eno is a browser extension that enables customers to request a ‘virtual’ credit card number for increased security when making online purchases, although the extension doesn’t allow you to actually talk to Eno.

Billed as an ‘AI assistant,’ Eno is a purely ‘conversational’ chatbot, meaning that it relies almost entirely on AI to generate its responses. This means that Eno ‘reads’ each message it receives in order to figure out the best response. The thing about fully conversational chatbots is that they are incredibly complicated to build, relying on gargantuan sets of data and a large team of data scientists to train the AI models that underpin them. Yet, despite all of that work, conversational chatbots are limited because the technology that underpins them, a type of AI called natural language processing, is still nascent and very prone to error. This is in contrast to chatbot systems that only use AI to determine the intent of the first message and then hand the conversation over to a simpler pre-programmed chatbot that guides the customer through an automated workflow, for example, to report a credit card as missing and request a replacement. At Helpshift, we’ve found that using AI and automation in a practical way to augment the skills of agents greatly improves the utility of chatbots in customer service given the current limitations of conversational AI.

So beyond trust considerations, its technical complexity and its capital-intensive creation, how useful is Eno, actually? A good customer service chatbot should maximize utility for both customers and agents by reducing the friction customers face in accessing support and reducing the volume of issues that agents handle, saving significant amounts of time for both. Any chatbot that isn’t doing these things for a brand and its customers is a missed opportunity to dramatically improve the efficiency and quality of the customer support journey. So in this edition of Bot Wars, we’ll put Eno through its paces to determine where it excels and where it could use a little tweaking to truly become a stand-out customer support chatbot.

The sections that follow will provide a summary of features within three broad categories and provide a score for each. Scores are out of 5, so a perfect score is 15. Here’s a scoring rubric for your reference:

Scoring Rubric

| Criteria | Points |
| --- | --- |
| Awareness of context & proactivity | 5 |
| Ease of use & efficiency | 5 |
| Persona & Empathy | 5 |
| Total | 15 |

2. Awareness of context and proactivity

For chatbots to be helpful, they need to first understand the context of the customer’s issue and then proactively provide them with a path to resolution — whether that be by providing self-help resources or transferring to the right bot or agent that can help resolve the issue.

Note that for this section we did not consider Eno’s push alerts for potentially fraudulent activity as the primary use case we are assessing is how Eno enables the customer support journey. We also didn’t have a way of generating these notifications without making outrageously large purchases on our team member’s credit card.

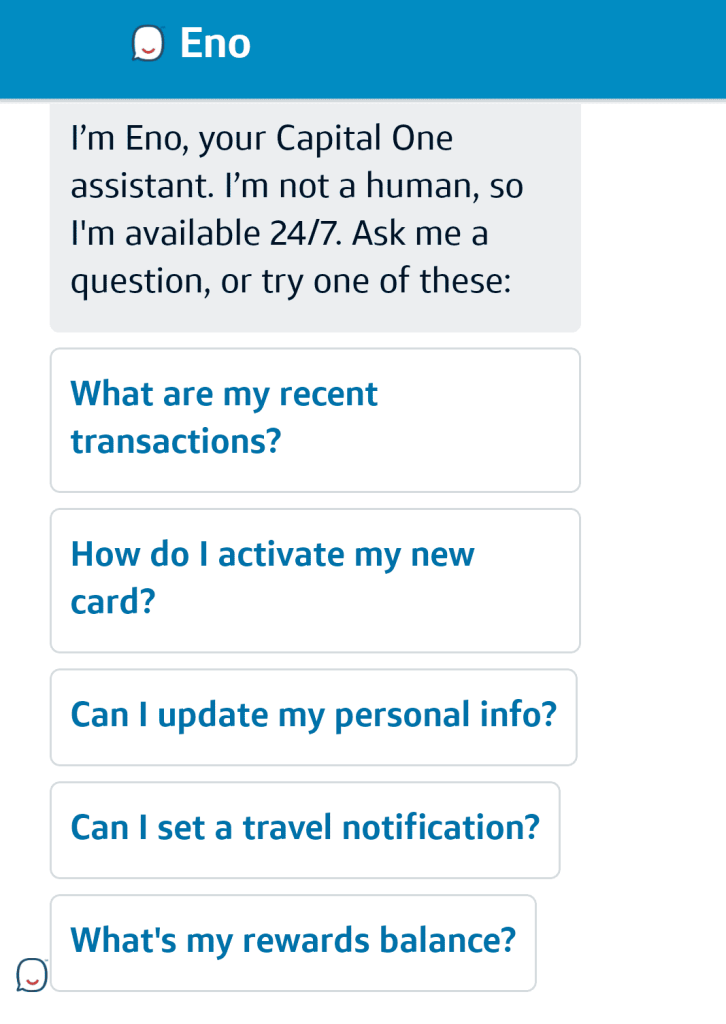

When first entering Eno’s messaging experience, it immediately greets you and explains a bit about how you can interact with it. It also provides several options for the customer to tell it what their issue is about. Additionally, the customer can free-type to describe their issue.

We took the time to test out both options to see how Eno performed.

Eno provided us with the following options upfront:

- What are my recent transactions?

- How do I activate my new card?

- Can I update my personal info?

- Can I set a travel notification?

- What’s my rewards balance?

These options seem to cover the most common questions that customers ask, but Eno doesn’t provide the same options every time. In one test it gave us the option to ask about balance transfers rather than updating personal information. However, it doesn’t appear that the options are personalized based on the user’s most recent online activity. Rather, Eno seems to provide five random options selected from a larger list of common queries.

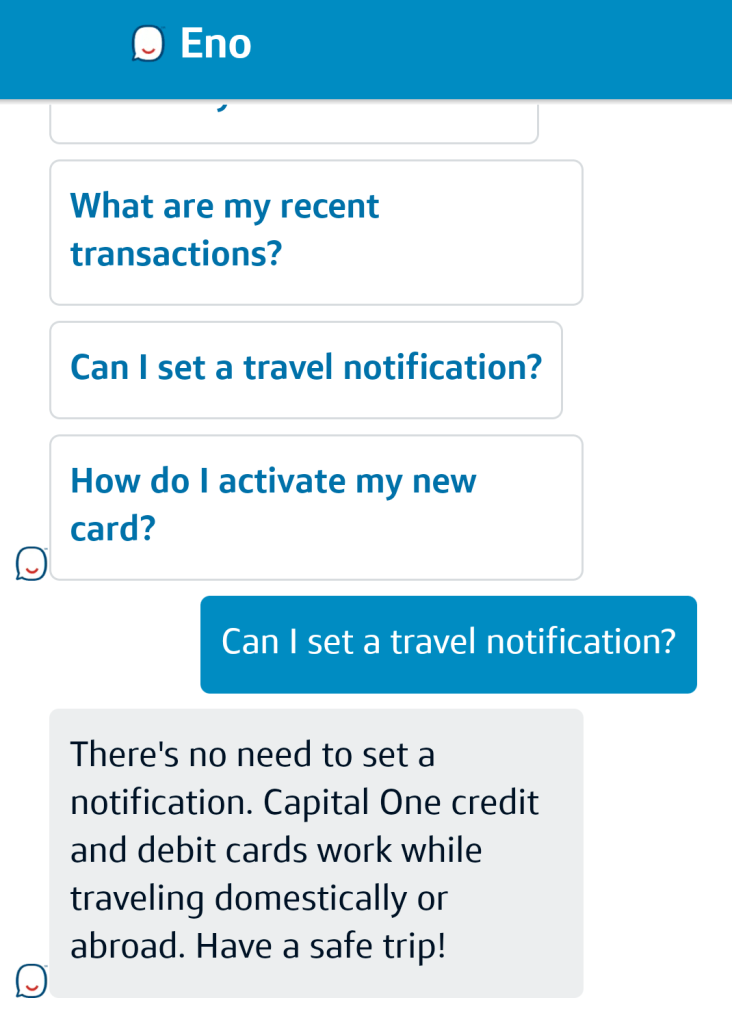

We tested all of the options we were presented with. Eno’s responses were very straightforward. Requesting balance and transaction information surfaced the relevant data. Selecting ‘travel notifications’ returned a message that all Capital One cards can be used abroad without the need to notify the bank. Other options provided a link to the relevant page where the user could self-serve.

We also wanted to test Eno’s much-hyped AI capabilities by free-typing a few queries. These surfaced a few interesting results:

First, a few common questions

We started by asking Eno some questions based on articles in Capital One’s FAQs page for credit card customers, with the assumption that these would be the most likely type of queries Eno would be trained to understand. These questions were:

- How can I change my credit card due date?

- How can I add an authorized user?

- How can I do a balance transfer?

All of these questions resulted in a very straightforward single answer with a link out to the relevant self-service page. So far it seems that the AI capabilities of Eno are pretty good, but from here we want to see how it handles irregular and unique queries.

How Eno handles typos

Typos tend to be a major stumbling block for many conversational chatbots as AI models often can’t understand them. In order to cope with typos, AI models need to be extensively trained with large amounts of data for each query to learn all the common ways someone might ask a particular question — even the ways they might mistype it. So, throwing typos at Eno is a great way to test out the robustness of the AI model and the depth of its training for that particular query.

Capital One is especially proud of Eno’s ability to understand the various ways people might ask for their balance, even going as far as training Eno to treat the money-mouth face emoji as a balance request.

We asked Eno for balance information by typing “Can you me my acnt balane,” which you might imagine sending while in a rush. The question contains not only significant typos but also an egregious grammatical error: the verb is missing entirely.

We were pleased to see that Eno returned balance information without a hitch, which suggests that Eno can hold its own against rival conversational chatbots that often get tripped up even with a single letter out of place.
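To give a feel for what tolerating typos involves, here is a minimal sketch of one simple alternative technique: fuzzy-matching each word of a message against a small vocabulary using Python’s standard-library `difflib`. To be clear, this is not how Eno works (a conversational chatbot learns variations from training data, as described above), and the keyword list, intent names and `cutoff` value are all hypothetical choices made for illustration.

```python
import difflib

# Hypothetical vocabulary mapping a keyword to an intent name.
# These names are illustrative only and are not taken from Eno.
KEYWORDS = {
    "balance": "get_balance",
    "transactions": "get_transactions",
    "activate": "activate_card",
}


def guess_intent(message, cutoff=0.75):
    """Return the intent for the first word that fuzzily matches a keyword.

    Returns None when no word is similar enough to any keyword.
    """
    for word in message.lower().split():
        # get_close_matches returns up to n keywords whose similarity
        # ratio to `word` is at least `cutoff`, best match first.
        matches = difflib.get_close_matches(word, list(KEYWORDS), n=1, cutoff=cutoff)
        if matches:
            return KEYWORDS[matches[0]]
    return None


print(guess_intent("Can you me my acnt balane"))
```

With this vocabulary, the misspelled ‘balane’ is close enough to ‘balance’ to be recognized, while ‘acnt’ is ignored. A keyword approach like this breaks down quickly as the vocabulary grows, which is part of why trained models need so much data per query.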

The less common questions

Testing typos is a great way to see the depth of a conversational chatbot’s training around a particular query. However, breadth is also important. This is where the limitations of conversational AI come to the foreground, even for comparatively robust systems such as Eno. You can teach an AI model to understand thousands of different ways that customers ask about their balance — but ask it about anything else and it will have no idea what you’re talking about.

In other cases, an AI model might struggle if the question is too complicated. That is, if the query seems to contain multiple intentions, the AI will need to pick the one that seems to be the most accurate — or pick the one that it’s been trained to understand because it doesn’t understand the other one.

For this test we presented Eno with the following two problems:

- I can’t get through the security verification to access my account

- I can’t activate my card for Apple Pay

These questions were inspired by a member of our team who actually had some headaches getting through one of Capital One’s security verifications. In this case, it was to activate his card for Apple Pay. Curiously, the verification was being asked for within the Capital One app even though he was already logged in using his thumbprint. We were interested to see how Eno would handle queries based on these unique circumstances.

In any case, we tried to ask Eno if it could help us get through the Fort-Knox-esque verification process without having to call support.

No such luck…

For the first query, Eno clearly has no idea what we’re talking about. We can’t be sure as to why Eno interpreted the query to be about the CVV code. In any case, a more optimal response would have been to ask for clarification or offer a connection to an agent. Responses can be programmed based on an AI’s confidence in its answer — so perhaps the bigger issue here is not that Eno gave the wrong response, but that it seemingly was very confident in its wrong response. However, we can’t be sure if Capital One has set confidence thresholds for Eno’s responses or to what degree they’ve set them.
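As a rough illustration of what confidence-based responses could look like, here is a minimal sketch. We don’t know how Eno is actually configured, so the `Prediction` type, the threshold values and the action labels below are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    """Output of a hypothetical intent classifier."""
    intent: str
    confidence: float  # assumed to range from 0.0 to 1.0


def choose_action(prediction, answer_at=0.8, clarify_at=0.5):
    """Pick a response strategy from the classifier's confidence.

    The thresholds are illustrative defaults, not recommended values.
    """
    if prediction.confidence >= answer_at:
        # Confident enough to answer directly.
        return f"answer:{prediction.intent}"
    if prediction.confidence >= clarify_at:
        # Unsure: ask the customer to confirm before answering.
        return f"clarify:{prediction.intent}"
    # Too uncertain to guess: offer a handoff to a human.
    return "escalate_to_agent"


print(choose_action(Prediction("cvv_help", 0.35)))
```

Under these assumed thresholds, a low-confidence guess about the CVV code would have produced an offer to connect with an agent rather than a confidently wrong answer.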

For the second query, we seem to have run into the common ‘double intent’ issue that plagues many chatbots. The type of AI that underpins conversational chatbots, called natural language processing, typically breaks down text into ‘intents’ and ‘entities’. The AI first interprets the ‘intent’ of the user’s message (an ‘utterance’ in AI parlance) and then interprets the entities associated with those intents. In our example, the intent is activate and the entities are card and apple pay. But that ultimately means that there are two intents, activate card and activate apple pay.

For Eno to handle double intents, it would need to be trained to do so. To look into this more, we first separated the two intentions into ‘activate card’ and ‘activate apple pay’. Eno responded to both the same way, offering to help us activate a new card. This led us to believe that Eno simply doesn’t understand what ‘Apple Pay’ means. When we tested this, we found it to be true: Eno interpreted ‘Apple Pay’ as a request for credit card bill details.

So, clearly Eno was picking ‘activate card’ because that’s the only intention it was able to understand. This led us to wonder if Eno is able to handle double intents at all, even if it understands them individually. So, we sent Eno a query with two intents that we knew it could handle separately: “I need to change my card due date and add an authorized user”.

Eno only responded to the ‘add authorized user’ intent, which implies that it can only handle one intent at a time.
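To make the ‘double intent’ problem concrete, here is a deliberately naive sketch of one way to handle it: split the message into clauses and classify each clause separately. This is our own illustration and not Eno’s design. The keyword rules stand in for a trained classifier, and every name in the code is hypothetical.

```python
import re

# Hypothetical keyword rules standing in for a trained intent classifier.
INTENT_KEYWORDS = {
    "change_due_date": ("due date",),
    "add_authorized_user": ("authorized user",),
    "activate_card": ("activate",),
}


def classify(clause):
    """Return the first intent whose key phrase appears in the clause, else None."""
    text = clause.lower()
    for intent, phrases in INTENT_KEYWORDS.items():
        if any(phrase in text for phrase in phrases):
            return intent
    return None


def detect_intents(message):
    """Split a message on 'and', commas or semicolons and classify each clause."""
    clauses = re.split(r"\band\b|[,;]", message, flags=re.IGNORECASE)
    intents = []
    for clause in clauses:
        intent = classify(clause)
        # Keep each recognized intent once, in the order it was mentioned.
        if intent and intent not in intents:
            intents.append(intent)
    return intents


print(detect_intents("I need to change my card due date and add an authorized user"))
```

Splitting on ‘and’ is crude (think of ‘terms and conditions’), and a classifier that has never seen ‘Apple Pay’ still won’t recognize it, so this only helps when each intent is already understood on its own.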

To be fair, any conversational AI chatbot is going to have these types of issues. The ‘double intent’ problem in natural language processing is one that engineers globally have been working on for years. It’s a tough nut to crack. So, in the end, the above errors have more to do with the current limitations of conversational AI than they do with Eno’s engineering. However, AI solutions, whether for customer support or not, should be implemented in a way that makes the best use of AI’s strengths while working around its weaknesses. For example, when using an AI chatbot for customer support, it’s best practice to give the customer an easy out when the AI or automation fails, typically by enabling customers to chat with a live agent without switching channels. Yet at no point in working through the above issues were we offered escalation to a support agent, which brings us to the next section.

Helpshift score: 4.0

Overall Eno quickly understood our issues and proactively surfaced relevant information or sources for self-service. Typed queries were generally well understood and it’s clear that Eno has been trained extensively to understand many variations of certain queries. However, it seems that Eno has been trained to understand only a small range of queries and also struggles with complex queries that contain more than one intention.

3. Ease of use & efficiency

Ultimately, customer support chatbots are only useful if they’re easy for customers to use and enable agents to work more effectively. Beyond contextual understanding and proactivity, chatbots need to take a customer down a logical and seamless path to resolution — avoiding bottlenecks, delays or the dreaded frustration loop of ‘sorry, I don’t understand, can you rephrase your question?’ over and over again with no escape. In terms of efficiency, a good bot should waste as little of the customer’s time as possible by providing pertinent information upfront without the customer having to dig around, or it should transfer the customer to an agent if it can’t help.

It’s in this area where there are some missed opportunities. Eno isn’t connected to Capital One’s customer support ecosystem. If you tell it that you don’t understand or that you want to speak to someone it provides you with a link to the ‘contact us’ page which provides a list of phone numbers to call support. Customers are also not able to speak to an agent within the same messaging interface, and in fact, Capital One does not offer any sort of chat or messaging-based support to its customers — or at least, not that we could find. If Eno can’t help you, your only option is the phone.

Additionally, Eno does not lead customers through existing common workflows. Even activating a credit card has to be done on an external page that Eno provides a link to.

That being said, Eno is still useful in a customer support context in that it’s able to surface self-serve options for the most common questions, which means that it likely deflects a large volume of calls related to credit cards.

Still, we had to deduct a large number of points as it’s clear that Capital One is missing a big opportunity to further embed Eno into their customer support organization through easy access to live agents and simple automations for common workflows.

Helpshift score: 2.0

Eno is generally easy and intuitive for customers to engage with, and it’s able to surface useful information and self-help resources. Beyond that, however, Eno’s only connection to customer support is a link to a list of phone numbers, and it has no bot capabilities beyond providing single answers.

4. Persona & empathy

One aspect of chatbots that can make a big impact on the customer experience is their persona and ability to empathize with customers. Of course, chatbots are pre-programmed by human beings, so customer service teams need to make sure that they translate the brand voice and tone over to their chatbots in order to create a consistent experience for customers.

Chatbot personas can range from bland to silly — with some brands employing a mascot as their chatbot, such as an animal. Others try to pass their bot off as a real person, which we don’t recommend. Likewise, how a chatbot responds to customers can also vary, with some brands opting for witty replies while others prefer drier responses. Regardless of the persona and voice a brand chooses for its chatbots, at the very least they should be friendly and employ a helpful and empathetic tone of voice.

Capital One invested a lot of thought into how Eno would be received by customers. Giving Eno a ‘personality’ was integral to helping customers trust the technology. This has led Capital One to give Eno the ability to deliver witty responses to innocuous questions such as “Will you marry me?”, to which Eno responds, “Nah, I’m a young chatbot and still getting to know little ol’ me.”

You can also ask Eno to tell you a joke, a common gimmick for AI assistants. We decided to test Eno’s sense of humor:

Beyond its parlor tricks, Eno’s personality is upbeat. If you consider Capital One’s brand image, this makes sense (What’s in your wallet?). Responses are peppered with cheery phrases — “Happy to Help!”; “Understood!”; “You got a new card!” And it’s hard to miss that the icon that represents Eno is simply a chat bubble with a smile. Eno is literally all smiles.

Overall, these features make Eno a pleasant chatbot to engage with, which is possibly why customers apparently love it despite its limited use for actual customer support.

Helpshift score: 5.0

Eno is a lovely chatbot to interact with. It might not be able to get you in touch with an agent, but if you’re bored or lonely, you can certainly amuse yourself for a few minutes shooting the breeze with Eno.

5. The verdict

Overall score – 11/15

We gave Eno an overall score of 11 out of 15. It’s important to note that our analysis is specifically based on a customer support use case. At the end of the day, Eno is a QuickSearch Bot. That means that all Eno can do is respond to questions with a single answer. If we were judging Eno purely on that, we could give it a much higher score. But for a chatbot to really succeed as a customer support tool, it needs to also provide seamless connections to other bots that can guide customers through common workflows or to an agent if needed.

One could argue that customer support was not top of mind for Capital One in developing Eno. Indeed, Eno mostly functions as a more straightforward way to interact with the website and also gives Capital One a certain amount of street cred in the AI space. That being said, customer expectations are important, and as Eno is labeled on the menu as ‘Help,’ customers rightly expect that button to be the gateway to an end-to-end customer support experience, but it’s not.

Here’s a quick breakdown:

Score Breakdown

| Criteria | Score |
| --- | --- |
| Awareness of context & proactivity | 4 |
| Ease of use & efficiency | 2 |
| Persona & Empathy | 5 |
| Total | 11/15 |

Strengths – Eno is great as a QuickSearch Bot. It responds well to most simple queries and provides useful information. Eno has a very engaging personality that matches well with the Capital One brand.

Weaknesses – Eno does not enable end-to-end customer support. There is no ability to transfer seamlessly to an agent and there are no other bots that are part of the experience that can guide users through common workflows.

We hope you’ve found this Bot Wars battle useful in understanding what a great chatbot experience should look like. Stay tuned for the next installment of Bot Wars!